0.0 Executive Summary

This report explains how computers store and process data using binary systems, character encoding, and logic gates.

The goal was to understand how raw 0s and 1s are interpreted as readable text, numbers, and images. This understanding helps prevent mistakes when working with system logs, network data, and security investigations.

Understanding how binary conversion and encoding work reduces the risk of misreading data or making errors during troubleshooting or incident response.

The result is a clear understanding of how computers handle data at a low level, which improves accuracy when analyzing systems.

1.0 Binary Systems and Logic Gate Analysis

1.1 Project Description

The goal of this task was to understand how computers process data so errors can be avoided during system analysis and troubleshooting.

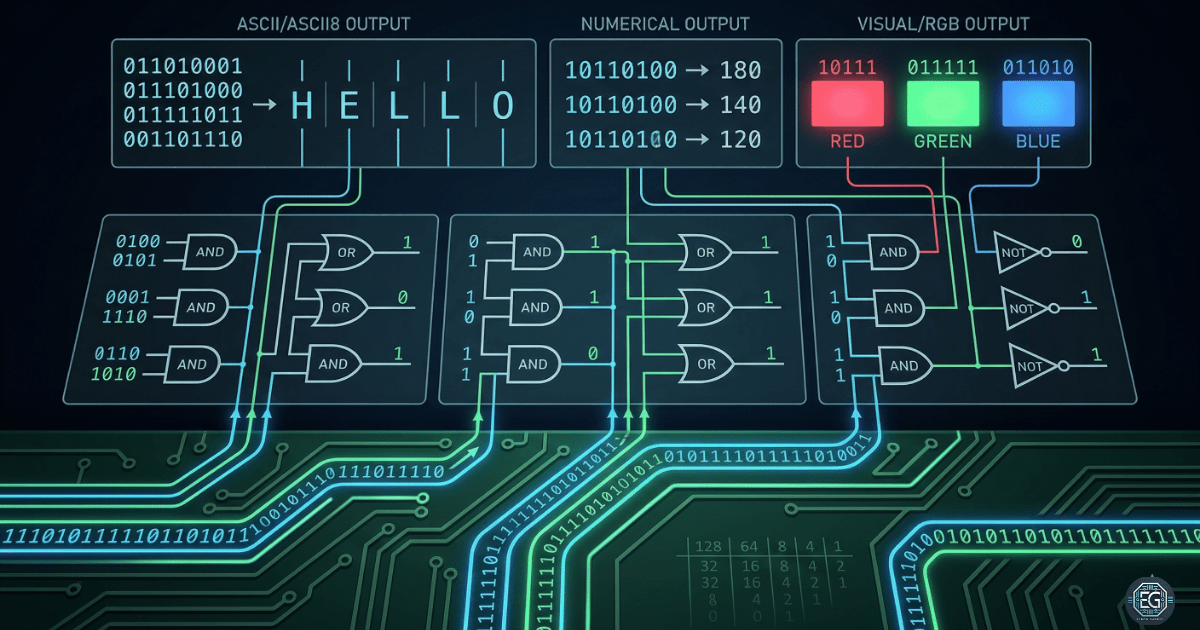

The process focused on three main areas:

- Binary conversion to ensure data is translated correctly

- Character encoding (ASCII/UTF-8) to understand how text is stored

- Logic gates to explain how computers make decisions

This ensures that tasks like log analysis and packet inspection are based on correct data interpretation.

1.2 Technical Task / Troubleshooting Process

The task focused on how computers use simple electrical states (1s and 0s) to represent complex data.

Key Actions & Observations

- Binary-to-Decimal Conversion:

  - Used a weighted bit table (column weights 128, 64, 32, 16, 8, 4, 2, 1) to convert binary values into decimal numbers

- Data Representation Auditing:

  - Mapped binary values to characters using ASCII

  - Reviewed how UTF-8 extends ASCII with multi-byte sequences to support characters from other languages

- Logic Gate Modeling:

  - Studied AND, OR, and NOT gates

  - Observed how combining them implements decision-making in hardware

- Refinement (RRF):

  - A single flipped bit can change the resulting value or character. This shows why accurate data handling is critical.
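The weighted-bit-table method and the single-bit-error observation above can be sketched in Python. This is a minimal illustration assuming 8-bit unsigned values; `binary_to_decimal`, `decimal_to_binary`, and `flip_bit` are hypothetical helper names, not a library API.

```python
# Hypothetical helpers for illustration; not a standard library API.
# Column weights of an 8-bit weighted bit table, most significant bit first.
WEIGHTS = [128, 64, 32, 16, 8, 4, 2, 1]


def binary_to_decimal(bits: str) -> int:
    """Add up the weight of every column that holds a 1."""
    if len(bits) != 8 or set(bits) - {"0", "1"}:
        raise ValueError(f"expected 8 binary digits, got {bits!r}")
    return sum(w for w, b in zip(WEIGHTS, bits) if b == "1")


def decimal_to_binary(value: int) -> str:
    """Subtract weights from largest to smallest to build the bit string."""
    if not 0 <= value <= 255:
        raise ValueError(f"{value} does not fit in 8 bits")
    bits = []
    for w in WEIGHTS:
        if value >= w:
            bits.append("1")
            value -= w
        else:
            bits.append("0")
    return "".join(bits)


def flip_bit(bits: str, position: int) -> str:
    """Invert one bit; position 0 is the leftmost (128) column."""
    flipped = "1" if bits[position] == "0" else "0"
    return bits[:position] + flipped + bits[position + 1:]


original = "01010111"              # 87, the ASCII code for 'W'
corrupted = flip_bit(original, 2)  # flip the 32 column only
print(original, binary_to_decimal(original), chr(binary_to_decimal(original)))    # prints: 01010111 87 W
print(corrupted, binary_to_decimal(corrupted), chr(binary_to_decimal(corrupted)))  # prints: 01110111 119 w
```

Flipping the 32 column turns 01010111 (87, ASCII `W`) into 01110111 (119, ASCII `w`), so a single bit is enough to change what an analyst reads.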

Root Cause:

Data interpretation errors often stem from misunderstanding how binary values and encodings work. This was addressed by using a clear, repeatable method for converting data and validating the result.
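To make the ASCII/UTF-8 distinction concrete, the sketch below uses Python's built-in `str.encode` to print each character's bytes in binary. ASCII covers only code points 0 to 127, one byte each, and those bytes are identical in UTF-8; other characters need two to four bytes in UTF-8 and cannot be encoded in ASCII at all. `describe_bytes` is a hypothetical helper and the sample characters are arbitrary.

```python
def describe_bytes(text: str, encoding: str) -> str:
    """Describe each byte of `text` in `encoding` as 8-bit binary (hypothetical helper)."""
    try:
        data = text.encode(encoding)
    except UnicodeEncodeError as err:
        # ASCII only covers code points 0-127, so anything else fails here.
        return f"{text!r} in {encoding}: cannot encode ({err.reason})"
    bits = " ".join(f"{byte:08b}" for byte in data)
    return f"{text!r} in {encoding}: {len(data)} byte(s) -> {bits}"


for sample in ("W", "é", "€"):
    for encoding in ("ascii", "utf-8"):
        print(describe_bytes(sample, encoding))
```

Running it shows `W` as the same single byte in both encodings, `é` as the two UTF-8 bytes 11000011 10101001 (0xC3 0xA9), and `€` as three UTF-8 bytes, while both non-ASCII characters fail under ASCII.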

1.3 Resolution and Validation

The approach was validated by testing binary conversions and logic operations.

| Parameter | Configuration Value |
|---|---|
| Management Tool | Weighted Bit Table / Truth Tables |
| System Mode | Low-Level Data Analysis |
| Encoding Standard | ASCII / UTF-8 |
| Scope | Foundational Computing Principles |

Validation Steps

- Converted binary `10011101` to decimal `157`

- Converted decimal `87` to binary `01010111`

- Verified logic gate behavior using truth tables

- Confirmed results matched ASCII character outputs
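The validation steps can be reproduced with Python built-ins: `int(text, 2)` parses a binary string and `format(value, "08b")` produces a zero-padded 8-bit string, so each conversion can be checked by converting it back. The truth tables model AND, OR, and NOT with bitwise operators on the integers 0 and 1; this is a software sketch of gate behavior, not a hardware description.

```python
from itertools import product

# Forward conversions from the validation steps, each checked by reversing it.
binary_in = "10011101"
decimal_out = int(binary_in, 2)
assert format(decimal_out, "08b") == binary_in  # reverse check
print(f"{binary_in} -> {decimal_out}")

decimal_in = 87
binary_out = format(decimal_in, "08b")
assert int(binary_out, 2) == decimal_in  # reverse check
print(f"{decimal_in} -> {binary_out} (ASCII {chr(decimal_in)!r})")

# Truth tables for the three gates, with inputs and outputs as 0/1.
print("A B | AND OR")
for a, b in product((0, 1), repeat=2):
    print(f"{a} {b} |  {a & b}   {a | b}")

print("A | NOT")
for a in (0, 1):
    print(f"{a} |  {1 - a}")
```

Only 87 has an ASCII character (`W`); 157 lies above ASCII's 0 to 127 range, so it must be interpreted under some other encoding or as a plain number.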

2.0 Conclusion

2.1 Key Takeaways

- Computers operate using binary (0s and 1s)

- Accurate data handling starts at the bit level

- Encoding standards such as ASCII and UTF-8 map binary values to readable text

- Logic gates control how computers make decisions

2.2 Security Implications and Recommendations

Risk: Forensic Misinterpretation

Incorrectly decoding binary data can lead to wrong conclusions during an investigation.

Mitigation: Use consistent conversion methods and verify results with reverse calculations.
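As an illustration of how the same bytes can support different conclusions, the sketch below reads one byte as unsigned and as signed (two's complement), and two bytes in big-endian and little-endian order, using the standard `int.from_bytes` and `int.to_bytes` methods. Which reading is correct depends on the data format being analyzed; the two-byte sample is arbitrary.

```python
raw = bytes([0b10011101])  # the byte 10011101 from the validation steps

unsigned = int.from_bytes(raw, "big", signed=False)  # 157
signed = int.from_bytes(raw, "big", signed=True)     # -99 in two's complement
print(f"{raw.hex()} unsigned={unsigned} signed={signed}")

pair = bytes([0x00, 0x57])
big = int.from_bytes(pair, "big")        # 87
little = int.from_bytes(pair, "little")  # 22272
print(f"{pair.hex()} big-endian={big} little-endian={little}")

# Reverse calculation: re-encode each reading and confirm it reproduces the raw bytes.
assert unsigned.to_bytes(1, "big") == raw
assert signed.to_bytes(1, "big", signed=True) == raw
assert big.to_bytes(2, "big") == pair
assert little.to_bytes(2, "little") == pair
```

Every reading round-trips, so a reverse calculation confirms the arithmetic but not the choice of interpretation; that choice still has to come from the specification of the format under investigation.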

Risk: Encoding Mismatches and Injection

Reading data in a different encoding from the one it was written in can garble text, and inconsistent decoding can let malformed input bypass validation checks.

Mitigation: Standardize encoding (UTF-8) and validate all inputs.
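Both halves of this risk can be shown with Python's built-in codecs: UTF-8 bytes decoded as Latin-1 produce garbled text, and strict UTF-8 decoding rejects malformed input such as the overlong sequence `C0 AF`, a non-standard encoding of `/` historically used to slip path-traversal strings past filters. `decode_untrusted` is a hypothetical helper showing one way to validate input before further processing, not a complete input-validation scheme.

```python
def decode_untrusted(data: bytes) -> str:
    """Decode input strictly as UTF-8, rejecting malformed byte sequences (hypothetical helper)."""
    try:
        return data.decode("utf-8", errors="strict")
    except UnicodeDecodeError as err:
        bad = data[err.start:err.end].hex()
        raise ValueError(f"invalid UTF-8 at byte {err.start}: {bad}") from err


written = "café".encode("utf-8")
print(written.decode("latin-1"))  # encoding mismatch, prints: cafÃ©
print(decode_untrusted(written))  # matching encoding, prints: café

try:
    decode_untrusted(b"..\xc0\xaf..\xc0\xafetc")  # overlong "/" sequences
except ValueError as err:
    print("rejected:", err)
```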

Best Practices

- Strengthen understanding of bitwise operations

- Use consistent encoding formats (UTF-8)

- Validate system logs for accuracy

- Document all conversion steps

Framework Alignment

- Aligns with NIST Cybersecurity Framework (Identify and Protect)

- Supports CIS Controls for data protection

- Helps maintain system integrity and audit accuracy